Artificial neural networks are ubiquitous in research and technology, including everyday applications such as speech recognition. Despite this, researchers still do not know exactly what is going on deep inside these networks. To find out, researchers at the Göttingen Campus Institute for Dynamics of Biological Networks (CIDBN) at Göttingen University and the Max Planck Institute for Dynamics and Self-Organisation (MPI-DS) have carried out an information-theoretic analysis of Deep Learning, a special form of machine learning. They found that information is represented in a less complex way the more it is processed. Furthermore, they observed training effects: the more often a network is "trained" with certain data, the fewer "neurons" are needed to process the information at the same time. The results were published in Transactions on Machine Learning Research.

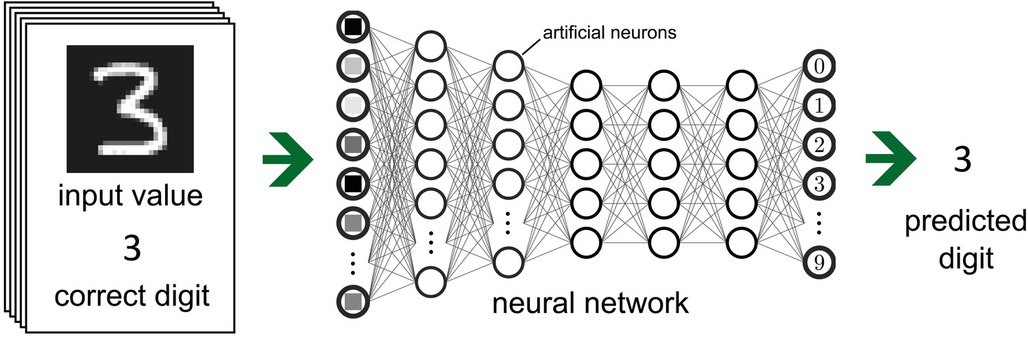

Artificial neural networks of the Deep Neural Network type are composed of numerous layers, each consisting of artificial neurons. The networks are informed by the way the cerebral cortex works. They must first learn to recognise and then generalise patterns. To do this, they are trained with data. For their study, the researchers used images of handwritten numbers that the network was supposed to recognise correctly. The principle is simple: an image is read by the input layer. Then, one by one, the intermediate layers take in the contents of the image, distributing the information among the artificial neurons. Ideally, at the end, the output layer delivers the correct result.
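The layer-by-layer flow described above can be sketched in a few lines of Python. This is only an illustration, not the network used in the study: the layer sizes, the random (untrained) weights, the ReLU and softmax functions, and all function names are assumptions chosen for the example.

```python
import math
import random

def weighted_sums(inputs, weights, biases):
    """Weighted sum of the inputs plus a bias, computed for each neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Simple activation function: negative values are set to zero."""
    return [max(0.0, v) for v in values]

def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def random_layer(n_in, n_out, rng):
    """Random weights and zero biases: a stand-in for learned values."""
    weights = [[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def forward(image, layers):
    """Pass an image through all layers and keep every layer's activations."""
    activations = [image]                      # input layer
    for weights, biases in layers[:-1]:        # intermediate layers
        activations.append(relu(weighted_sums(activations[-1], weights, biases)))
    weights, biases = layers[-1]               # output layer
    activations.append(softmax(weighted_sums(activations[-1], weights, biases)))
    return activations

rng = random.Random(0)
sizes = [64, 16, 8, 10]  # assumed: 8x8 pixel image, two intermediate layers, 10 digits
layers = [random_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
image = [rng.random() for _ in range(sizes[0])]  # placeholder for a handwritten digit

activations = forward(image, layers)
probs = activations[-1]
predicted_digit = max(range(len(probs)), key=probs.__getitem__)
```

Because the weights here are random, the predicted digit is meaningless; training would adjust the weights until the output layer tends to deliver the correct result. Keeping the activations of every layer is what makes a layer-by-layer analysis possible.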

The researchers used a novel technique known as Partial Information Decomposition to determine how the input values are transformed in the intermediate layers. In this method, the information is broken down into its individual parts. This reveals how the artificial neurons divide up the processing: does each neuron specialise in individual aspects of the information? Is there a lot of redundancy or more synergy?
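The difference between redundancy and synergy can be illustrated with two toy cases. The sketch below does not compute a Partial Information Decomposition and is not the measure from the publication; it only compares ordinary mutual information for two hypothetical pairs of binary "neurons", using function names invented for this example. In the synergy case (XOR), neither neuron alone says anything about the target, but both together determine it. In the redundancy case, each neuron alone already carries the full information.

```python
import math
from collections import Counter
from itertools import product

def mutual_information(pairs):
    """Mutual information I(A;B) in bits, treating each (a, b) sample as equally likely."""
    n = len(pairs)
    joint = Counter(pairs)
    count_a = Counter(a for a, _ in pairs)
    count_b = Counter(b for _, b in pairs)
    return sum(
        (c / n) * math.log2((c / n) / ((count_a[a] / n) * (count_b[b] / n)))
        for (a, b), c in joint.items()
    )

def info_profile(samples):
    """For samples (x1, x2, y), return I(X1;Y), I(X2;Y) and I(X1,X2;Y)."""
    i1 = mutual_information([(x1, y) for x1, x2, y in samples])
    i2 = mutual_information([(x2, y) for x1, x2, y in samples])
    i12 = mutual_information([((x1, x2), y) for x1, x2, y in samples])
    return i1, i2, i12

# Synergy: the target is the XOR of two independent binary neurons.
xor_samples = [(a, b, a ^ b) for a, b in product((0, 1), repeat=2)]
# Redundancy: both neurons are identical copies of the target.
copy_samples = [(a, a, a) for a in (0, 1)]

xor_profile = info_profile(xor_samples)    # (0.0, 0.0, 1.0)
copy_profile = info_profile(copy_samples)  # (1.0, 1.0, 1.0)
```

A full decomposition would go further and split the joint information into unique, redundant and synergistic parts for each neuron; these two extreme cases merely show why the individual values alone are not enough to tell them apart.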

"The further we move towards the output layer in the network, the fewer neurons the information is distributed across. The neurons become specialised. The representation of the information becomes less complex with processing and thus easier to read," explains David Ehrlich from CIDBN. Also, as training progresses, the number of neurons involved in coding the information decreases. Consequently, training contributes to a decrease in complexity during processing.

"The most significant part of this new finding is that we now have insights into the information structure and functioning of each intermediate layer. So, we can watch the information processing in artificial neural networks layer by layer – and even during the learning process," says Andreas Schneider from MPI-DS. "This offers a new starting point for improving deep neural networks. These networks are used in critical areas such as driverless cars and face recognition, so it is crucial to avoid errors. To do this, it is important to understand the inner workings of these networks in detail," the researchers conclude.

Original publication: Ehrlich, D. A. et al.: A Measure of the Complexity of Neural Representations based on Partial Information Decomposition. Transactions on Machine Learning Research (2023). Full text available here: https://openreview.net/pdf?id=R8TU3pfzFr

Contact:

Dr Britta Korkowsky

University of Göttingen

Göttingen Campus Institute for Dynamics of Biological Networks (CIDBN)

Heinrich Düker Weg 12, 37073 Göttingen, Germany

Tel: +49 (0)551 39-26675

Mail: cidbn@uni-goettingen.de

Dr Manuel Maidorn

Press Officer

Max Planck Institute for Dynamics and Self-Organization (MPI-DS)

Am Faßberg 17, 37077 Göttingen, Germany

Tel: +49 (0)551 5176-668

Mail: presse(at)ds.mpg.de